TL;DR

We control the Fourier frequency of the learned radiance field during training, progressively adding detail to prevent few-shot artifacts — no pretrained priors needed.

Input Views

High-quality rendering from as few as 3 input images of a scene.

Generic

No pretrained modules or data-driven priors — works across any scenario.

Training

Grid-based model achieves faster reconstruction than purely MLP-based methods.

Frequency Control

Progressive curriculum prevents high-frequency artifacts and floaters.

Abstract

We present a novel approach for few-shot NeRF estimation, aimed at avoiding local artifacts and capable of efficiently reconstructing real scenes. In contrast to previous methods that rely on pre-trained modules or various data-driven priors that only work well in specific scenarios, our method is fully generic and is based on controlling the frequency of the learned signal in the Fourier domain. We observe that in NeRF learning methods, high-frequency artifacts often show up early in the optimization process, and the network struggles to correct them due to the lack of dense supervision in few-shot cases. To counter this, we introduce an explicit curriculum training procedure, which progressively adds higher frequencies throughout optimization, thus favoring global, low-frequency signals initially, and only adding details later. We represent the radiance fields using a grid-based model and introduce an efficient approach to control the frequency band of the learned signal in the Fourier domain. Therefore our method achieves faster reconstruction and better rendering quality than purely MLP-based methods. We show that our approach is general and is capable of producing high-quality results on real scenes, at a fraction of the cost of competing methods. Our method opens the door to efficient and accurate scene acquisition in the few-shot NeRF setting.

Method Overview

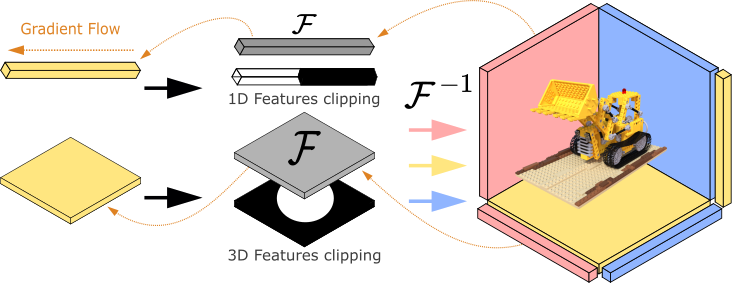

Method Architecture. Our method initializes 1D and 2D features in the spatial domain, projects them into the Fourier domain, and clips them with specialized filters. Projecting the clipped features back to the spatial domain yields smooth shapes.
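The project, clip, and project-back step in the caption can be illustrated with a generic low-pass filter on a 2D feature plane. This is a minimal sketch, not the authors' implementation: the function name `low_pass_2d`, the square (box) mask, and the `cutoff` parameter in cycles per sample are assumptions made for illustration, and the specialized filters used by the method may differ.

```python
import numpy as np

def low_pass_2d(features, cutoff):
    """Keep only Fourier coefficients whose frequency magnitude is <= cutoff.

    features: real array of shape (..., H, W), e.g. one 2D feature plane.
    cutoff:   hypothetical band limit in cycles per sample, from 0.0 to 0.5.
    """
    h, w = features.shape[-2:]
    # Frequencies of each FFT bin along the two spatial axes.
    fy = np.fft.fftfreq(h)[:, None]
    fx = np.fft.fftfreq(w)[None, :]
    # Assumed box-shaped mask; it is symmetric, so the output stays real.
    mask = (np.abs(fy) <= cutoff) & (np.abs(fx) <= cutoff)
    # Project to the Fourier domain, clip, and project back.
    spectrum = np.fft.fft2(features, axes=(-2, -1))
    return np.fft.ifft2(spectrum * mask, axes=(-2, -1)).real

# Usage: a random plane becomes smoother as the cutoff shrinks.
plane = np.random.default_rng(0).standard_normal((16, 16))
smooth = low_pass_2d(plane, cutoff=0.1)
```

With `cutoff=0.5` every bin passes and the plane is returned unchanged; with `cutoff=0.0` only the constant (DC) term survives, so the output is the plane's mean everywhere.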

Our method is built on two key observations. First, both strong overfitting and high-frequency artifacts typically occur early in the optimization process, and, if avoided in these early stages, they are significantly less prominent in the final result. Second, gradually increasing the maximal Fourier frequency of the learned signal both significantly regularizes the learned NeRF and gives the network enough degrees of freedom to learn fine details in the final stages of the optimization.
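One simple way to picture the second observation is a schedule that raises the maximal allowed frequency as training proceeds. The sketch below assumes a linear ramp. The function name `cutoff_at` and the parameters `f_start`, `f_end`, and `ramp_fraction` are hypothetical and chosen for illustration only; the actual curriculum used by the method may follow a different shape.

```python
def cutoff_at(step, total_steps, f_start=0.05, f_end=0.5, ramp_fraction=0.8):
    """Return an assumed frequency cutoff (cycles per sample) for a training step.

    The cutoff grows linearly from f_start to f_end over the first
    ramp_fraction of training, then stays at f_end so the final steps
    can fit fine details with the full frequency band.
    """
    ramp_steps = max(1, int(total_steps * ramp_fraction))
    t = min(step / ramp_steps, 1.0)  # progress through the ramp, in [0, 1]
    return f_start + t * (f_end - f_start)

# Usage: print the cutoff at a few points of a 1000-step run.
for step in (0, 200, 400, 800, 1000):
    print(step, round(cutoff_at(step, 1000), 3))
```

Early steps see only a narrow low-frequency band, which acts as the regularizer described above, while the last steps run with the full band.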

Progressively Integrating Complexity

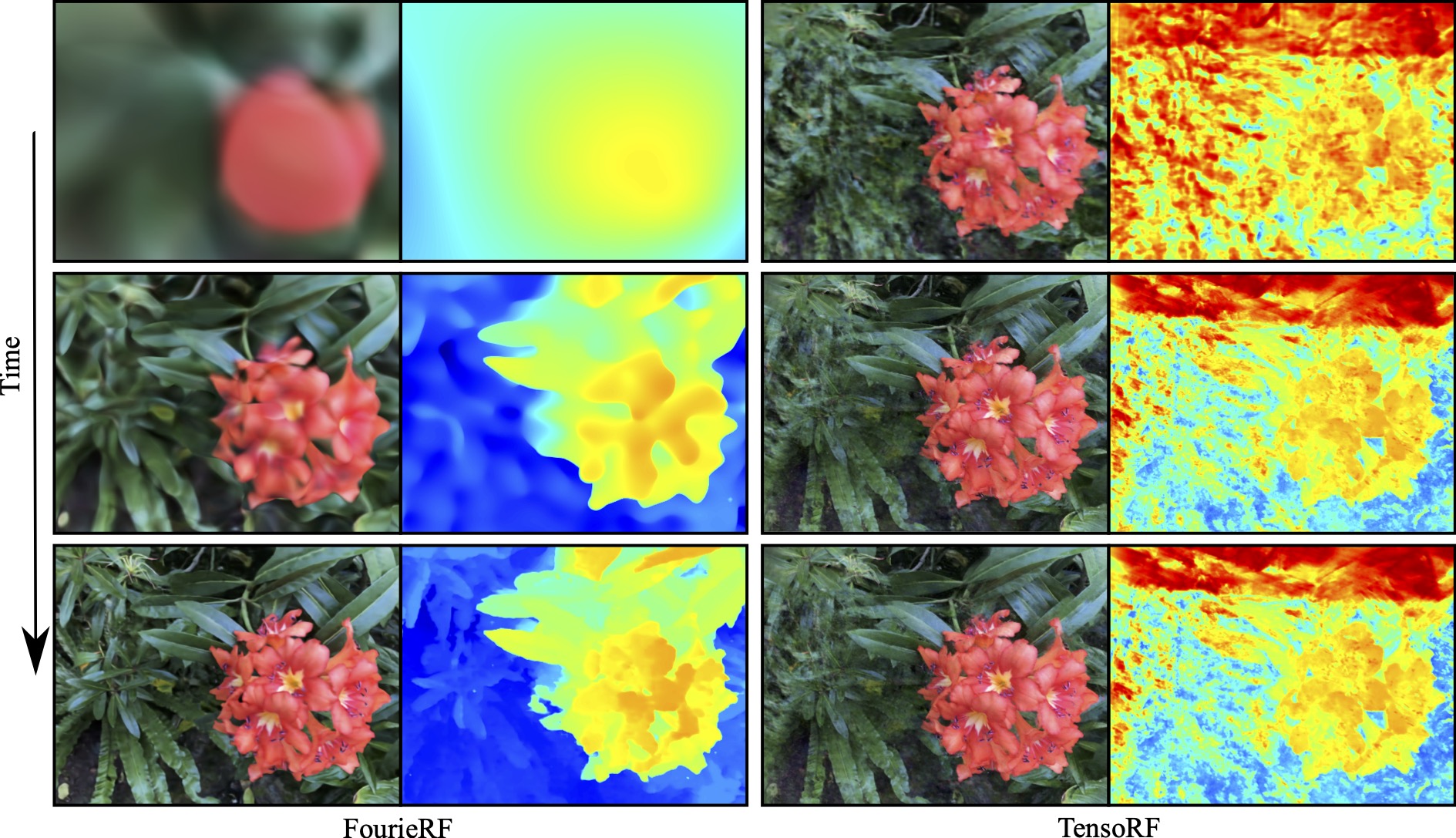

Watch how our method progressively integrates complexity during training. RGB (left) and depth prediction (right) evolve smoothly from low-frequency to high-frequency detail, avoiding the floaters and artifacts that plague standard few-shot NeRF methods.

RGB / Depth Comparison

Our method produces cleaner geometry and appearance, while baselines TensoRF and ZeroRF fill the scene with incoherent geometry and floaters.

BibTeX

@inproceedings{gomez2025fourierf,
  title={FourieRF: Few-Shot NeRFs via Progressive Fourier Frequency Control},
  author={Gomez, Diego and Gong, Bingchen and Ovsjanikov, Maks},
  booktitle={International Conference on 3D Vision (3DV)},
  year={2025}
}